08.28.2025

Anthropic announced today that it is changing its Consumer Terms and Privacy Policy, with plans to train its AI chatbot Claude with user data.

New users will be able to opt out at signup. Existing users will receive a popup that allows them to opt out of Anthropic using their data for AI training purposes.

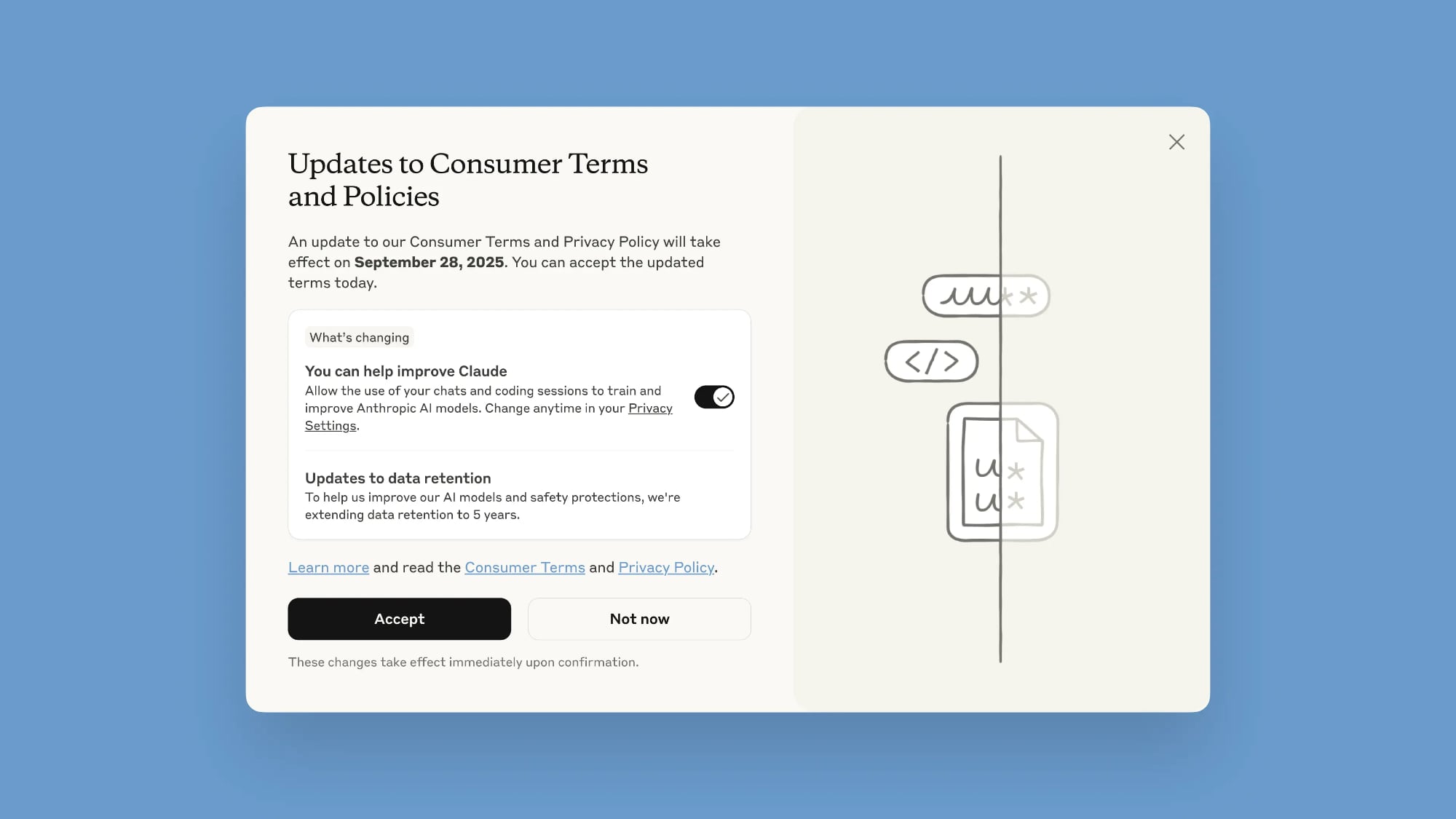

The popup is labeled "Updates to Consumer Terms and Policies," and when it shows up, unchecking the "You can help improve Claude" toggle will disallow the use of chats. Choosing to accept the policy now will allow all new or resumed chats to be used by Anthropic. Users will need to opt in or opt out by September 28, 2025, to continue using Claude.

Opting out can also be done by going to Claude's Settings, selecting the Privacy option, and toggling off "Help improve Claude."

Anthropic says that the new training policy will allow it to deliver "even more capable, useful AI models" and strengthen safeguards against harmful usage like scams and abuse. The updated terms apply to all users on Claude Free, Pro, and Max plans, but not to services under commercial terms like Claude for Work or Claude for Education.

In addition to using chat transcripts to train Claude, Anthropic is extending data retention to five years. If you opt in to allowing Claude to be trained with your data, Anthropic will keep your information for a five-year period. Deleted conversations will not be used for future model training, and for those who do not opt in to sharing data for training, Anthropic will continue keeping information for 30 days, as it does now.

Anthropic says that a "combination of tools and automated processes" will be used to filter sensitive data, with no information provided to third parties.

Prior to today, Anthropic did not use conversations and data from users to train or improve Claude, unless users submitted feedback.

This article, "Anthropic Will Now Train Claude on Your Chats, Here's How to Opt Out," first appeared on MacRumors.com.
